Key Takeaways

- Neural networks can serve as effective and computationally efficient surrogates for slow, complex Agent-Based Models (ABMs) used in burn wound healing research.

- The Spatio-temporal Attention Long Short-Term Memory (STA-LSTM) architecture demonstrated the best overall performance, accurately predicting both the spatial distribution and temporal dynamics of key immune-system markers (cytokines).

- While theoretically promising, incorporating physical laws through a Physics-Informed Neural Network (PINN) did not outperform specialized recurrent architectures such as STA-LSTM on this regression task.

TL;DR

Severe burns trigger a complex immune response that can lead to life-threatening complications. To understand this process, researchers use detailed but extremely slow computer simulations called Agent-Based Models (ABMs). The time and computing power these simulations require make them impractical for real-time clinical use, which creates a need for faster tools that can forecast a wound's healing trajectory. This study investigates neural networks as fast 'surrogates' that mimic the ABM's output. The researchers trained several architectures, including multiple Long Short-Term Memory (LSTM) variants and a Physics-Informed Neural Network (PINN), to predict cytokine levels in a burn wound. Their key contribution is showing that a Spatio-Temporal Attention LSTM (STA-LSTM) predicts the healing process most accurately and efficiently, outperforming the other models.

Why Does It Matter?

This paper provides a blueprint for overcoming the computational bottleneck of complex biological simulations. By showing that surrogate models, particularly STA-LSTM, can predict outcomes rapidly and accurately, it paves the way for integrating sophisticated models into time-sensitive research and, potentially, clinical decision-support tools. The work helps accelerate the translation of computational insights into practical applications for complex biological processes such as wound healing.

ABM Bottleneck

The core 'ABM bottleneck' identified in the paper is the heavy computational cost and long run time of high-fidelity agent-based models (ABMs). ABMs can simulate the immune response to burns in fine detail, but that cost makes them impractical for rapid clinical decision-making. The study addresses this limitation by training neural networks as lightweight surrogate models that mimic the ABM's behavior. Once trained, a surrogate generates cytokine-concentration predictions almost instantly, bypassing the simulation bottleneck. This offers a path from the insights of detailed biological models to a computationally efficient and clinically viable tool for predicting burn healing.
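
The paper's surrogates are neural networks. To show the surrogate workflow in a few lines of standard-library Python, the sketch below swaps in the simplest possible surrogate, a least-squares line. The workflow has three steps: pay the simulation cost once offline, fit a cheap model, then answer new queries with the cheap model. `slow_simulator` is a hypothetical toy, not the burn ABM, and its linear response is an assumption that makes a linear surrogate adequate here.

```python
import random


def slow_simulator(burn_size: float, steps: int = 20_000) -> float:
    """Hypothetical stand-in for one expensive ABM run (not the paper's model).

    Returns a noisy 'peak cytokine level' that grows linearly with burn size.
    The inner loop exists only to make each call deliberately costly.
    """
    rng = random.Random(round(burn_size * 1000))  # deterministic per input
    noise = sum(rng.gauss(0.0, 1.0) for _ in range(steps)) / steps  # small noise
    return 2.0 * burn_size + 1.0 + noise


def fit_linear_surrogate(xs, ys):
    """Ordinary least-squares fit y ~ a*x + b; returns a cheap predictor."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return lambda x: a * x + b


# Offline: pay the simulation cost once to build a training set.
train_x = [0.1 * i for i in range(1, 11)]
train_y = [slow_simulator(x) for x in train_x]

# Online: the fitted surrogate answers new queries without re-running the simulator.
surrogate = fit_linear_surrogate(train_x, train_y)
print(surrogate(0.55), slow_simulator(0.55))
```

A neural surrogate follows the same pattern, with a learned network in place of the fitted line and full spatio-temporal cytokine fields in place of a single number.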

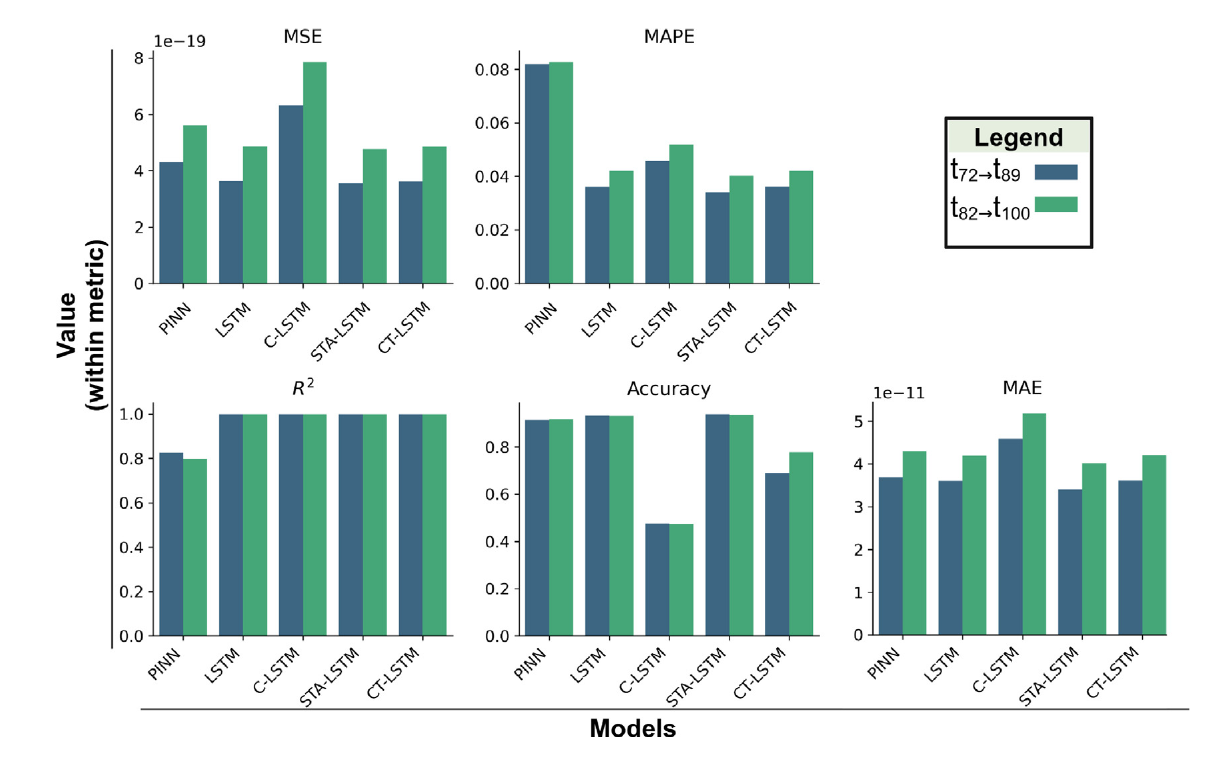

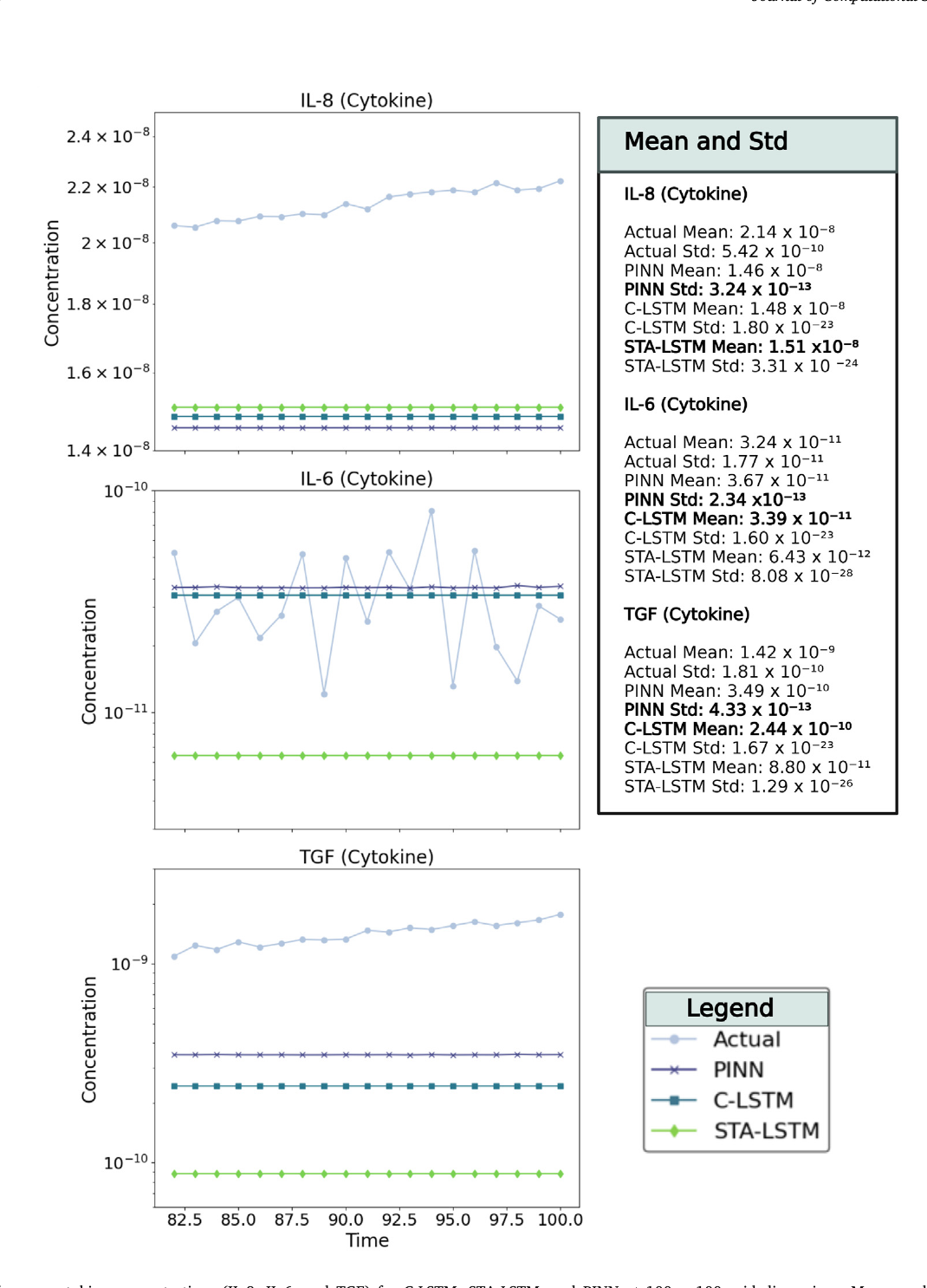

NN Model Zoo

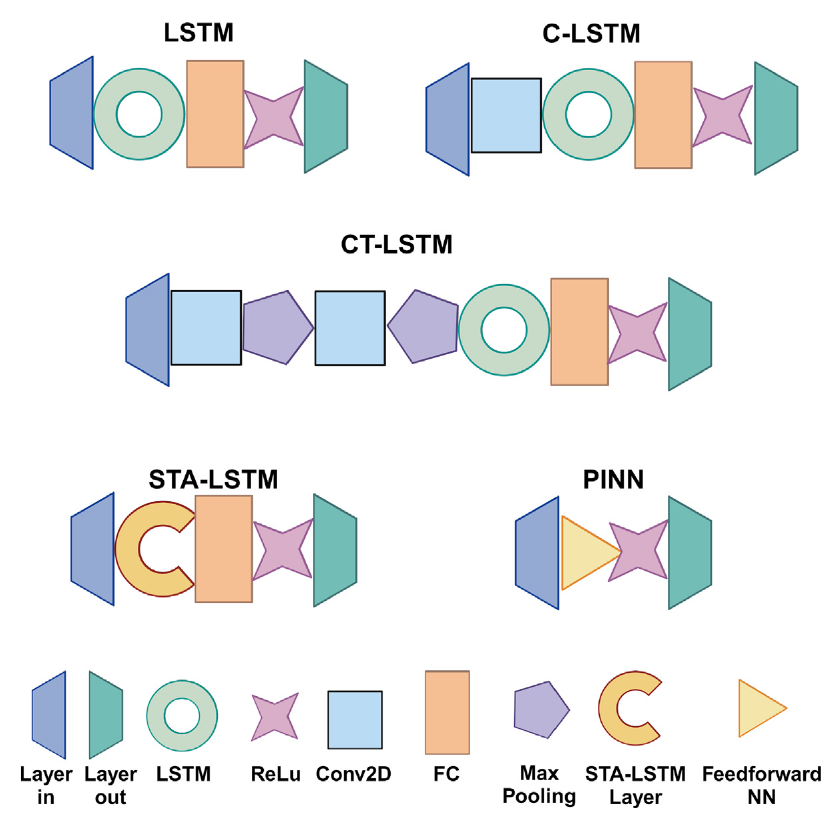

The paper's 'NN Model Zoo' is a structured comparison of neural network architectures that serve as fast surrogates for a complex agent-based burn wound model. The selection is systematic: each architecture tests how a different component handles the spatio-temporal data of cytokine dynamics. The zoo ranges from a standard LSTM baseline to hybrid models that add convolutions and, most effectively, attention mechanisms. The Spatio-Temporal Attention LSTM (STA-LSTM) achieves the best overall statistical performance, underscoring the value of attention for capturing complex biological patterns. The Physics-Informed Neural Network (PINN) offers a different trade-off, giving up some statistical accuracy in exchange for enforced physical consistency. The comparison therefore shows that there is no universal 'best' model, only a trade-off between maximizing predictive accuracy and ensuring biophysical plausibility.
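
The exact STA-LSTM layer layout is described in the paper and is not reproduced here. As a hedged illustration of the core attention step only, the sketch below does three things: it scores each hidden state against a query vector, normalizes the scores with softmax, and returns the weighted summary. The hidden states and the query are hypothetical. Spatio-temporal attention models generally apply this kind of weighting along both the time axis and the spatial axis.

```python
import math


def softmax(scores):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(states, query):
    """Scaled dot-product attention over a list of hidden-state vectors.

    states: one vector per time step (temporal attention) or per grid cell
            (spatial attention), e.g. LSTM outputs.
    query:  vector of the same length; learned in a real model, fixed here.
    Returns (weights, context): softmax weights and the weighted sum of states.
    """
    d = len(query)
    scores = [sum(h[i] * query[i] for i in range(d)) / math.sqrt(d) for h in states]
    weights = softmax(scores)
    context = [sum(w * h[i] for w, h in zip(weights, states)) for i in range(d)]
    return weights, context


# Three hypothetical 2-D hidden states; the query favours the first component.
hidden = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, context = attention_pool(hidden, [2.0, 0.0])
print([round(w, 3) for w in weights], [round(c, 3) for c in context])
```

States that align with the query receive larger weights, so the summary vector emphasizes the time steps or locations the model judges most relevant.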

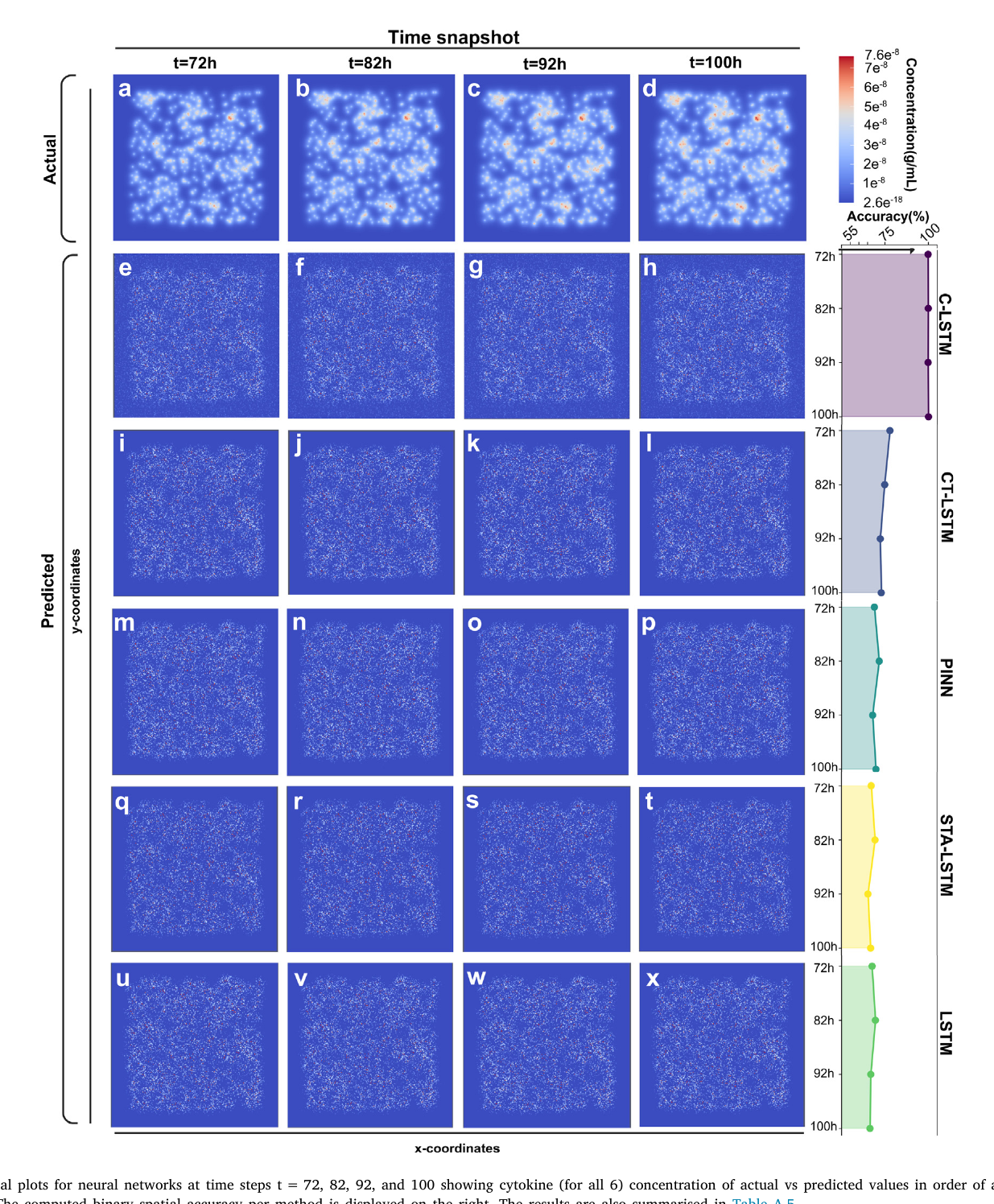

Spatial vs Stats

This study highlights a tension in predictive modeling between high statistical accuracy and faithful reproduction of spatial patterns. Conventional metrics such as R² and MSE summarize overall performance, but they can mask a model's failure to capture the spatial distribution of biological markers such as cytokines. The analysis shows that some models capture aggregate statistical trends yet fail to produce accurate spatial maps, which limits their biological utility. Conversely, other models reproduce spatial patterns more convincingly but score lower on statistical metrics. The research identifies the STA-LSTM architecture as bridging this divide: it excels on statistical benchmarks and also reconstructs the complex spatial dynamics, making it a more complete surrogate for the healing process.
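
A toy 1-D example shows how a pointwise score can favor a prediction with no spatial structure. The data are hypothetical, not from the paper. The "true" field has one sharp peak. One prediction keeps the peak but shifts it by one cell. The other predicts the field's mean everywhere.

```python
def mse(y, p):
    """Mean squared error between truth y and prediction p."""
    return sum((a - b) ** 2 for a, b in zip(y, p)) / len(y)


def r2(y, p):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y) / len(y)
    ss_tot = sum((a - mean_y) ** 2 for a in y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, p))
    return 1.0 - ss_res / ss_tot


# Hypothetical 1-D "cytokine field": a single sharp peak at cell 5.
truth = [0.0] * 10
truth[5] = 1.0

# Prediction A reproduces the peak's shape but places it one cell off.
shifted = [0.0] * 10
shifted[6] = 1.0

# Prediction B ignores spatial structure and predicts the field mean everywhere.
flat = [sum(truth) / len(truth)] * 10

print(f"shifted: MSE={mse(truth, shifted):.3f}  R2={r2(truth, shifted):.3f}")
print(f"flat:    MSE={mse(truth, flat):.3f}  R2={r2(truth, flat):.3f}")
```

The featureless prediction wins on both metrics because the shifted peak is penalized twice, once where it is missing and once where it wrongly appears. This is why inspecting predicted spatial maps alongside R² and MSE adds information.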

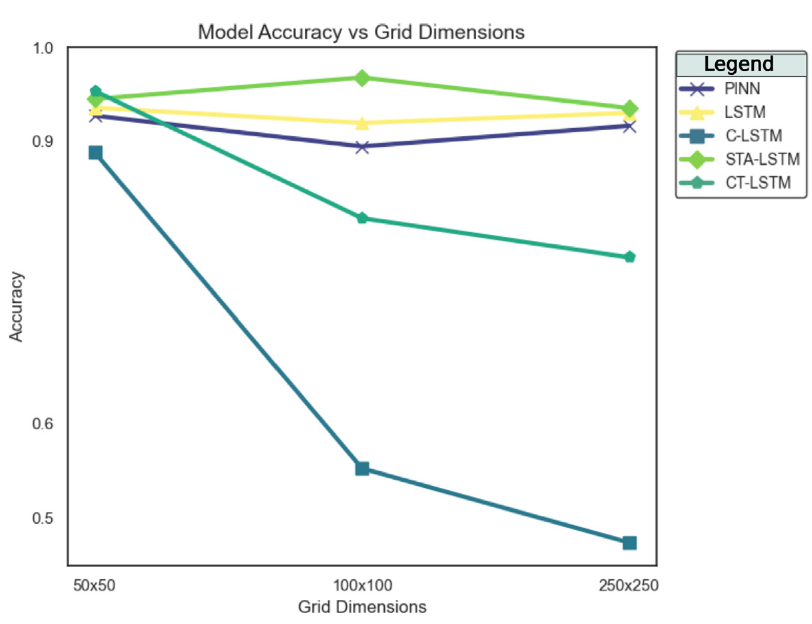

Scalability Woes

The analysis uncovers significant scalability problems for some neural network surrogates. Although the goal is to replace computationally heavy Agent-Based Models, models with convolutional layers, specifically C-LSTM and CT-LSTM, fail at scale: on larger grids (500×500) they either crash during training or lose much of their accuracy, indicating that they cannot handle the increased complexity of spatial dependencies. This is a critical limitation, because a surrogate's usefulness depends on its performance on realistic, high-resolution simulations. In contrast, the STA-LSTM and PINN architectures scale well and maintain high prediction accuracy. Architectural choice is therefore decisive when building practical, scalable surrogates for complex biological systems.
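
A back-of-the-envelope calculation suggests one plausible contributor to these failures, though it is not a diagnosis from the paper. The number of grid cells grows with the square of the grid side, so every per-cell feature map a convolutional layer stores grows 25-fold from a 100×100 to a 500×500 grid. Only the 500×500 size comes from the paper. The 100×100 comparison grid, the 100 time steps, the float32 storage, and the 32 feature maps are all hypothetical.

```python
def tensor_gib(side: int, timesteps: int, channels: int, bytes_per_value: int = 4) -> float:
    """GiB needed for a side x side x timesteps x channels tensor (float32 by default)."""
    return side * side * timesteps * channels * bytes_per_value / 2**30


# Hypothetical settings: 100 stored time steps and one conv layer with 32 feature maps.
T, FILTERS = 100, 32
for side in (100, 500):
    raw = tensor_gib(side, T, channels=1)          # one cytokine field over time
    conv = tensor_gib(side, T, channels=FILTERS)   # activations of one conv layer
    print(f"{side}x{side}: {side * side:,} cells, raw {raw:.3f} GiB, conv layer {conv:.2f} GiB")

growth = tensor_gib(500, T, 1) / tensor_gib(100, T, 1)
print(f"growth from 100x100 to 500x500: {growth:.0f}x")
```

Under these assumptions, a single convolutional layer's activations reach about 3 GiB at 500×500. Backpropagation must keep such activations for every layer and time step.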

Next: GRUs, PINNs

Building on the paper's analysis of surrogate models for burn wound healing simulations, a natural future direction centers on GRUs and PINNs. Gated Recurrent Units (GRUs) are a logical next step for improving computational efficiency. GRUs are a simpler alternative to the LSTMs tested, with fewer gates and therefore fewer parameters at the same hidden size, so they could offer a better trade-off between predictive accuracy and computational cost. In parallel, extending the Physics-Informed Neural Network (PINN) framework would push toward greater scientific plausibility. Integrating the underlying biological diffusion equations more deeply would yield a model that not only predicts temporal trends but also respects physical laws, which could improve its generalization and trustworthiness beyond pure data fitting.
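
The core PINN idea is a composite loss: a data-fit term plus a weighted penalty on how badly the prediction violates a governing equation. A full PINN differentiates the network's output with automatic differentiation. To stay dependency-free, the sketch below substitutes finite differences on predicted grid values. It assumes a plain 1-D diffusion equation u_t = D·u_xx with hypothetical constants; the paper's actual cytokine equations are not reproduced here. The weight `lam` is likewise a hypothetical name.

```python
def diffusion_residual(u_t0, u_t1, dt, dx, D):
    """Residual of u_t - D * u_xx at interior grid points.

    Forward difference in time, central difference in space (evaluated at t0),
    i.e. the explicit-Euler discretization of 1-D diffusion.
    """
    res = []
    for i in range(1, len(u_t0) - 1):
        u_t = (u_t1[i] - u_t0[i]) / dt
        u_xx = (u_t0[i - 1] - 2.0 * u_t0[i] + u_t0[i + 1]) / dx**2
        res.append(u_t - D * u_xx)
    return res


def pinn_style_loss(pred_t0, pred_t1, obs_t1, dt, dx, D, lam=1.0):
    """Data-fit MSE plus lam times the mean squared physics residual."""
    data = sum((p - o) ** 2 for p, o in zip(pred_t1, obs_t1)) / len(obs_t1)
    r = diffusion_residual(pred_t0, pred_t1, dt, dx, D)
    physics = sum(x * x for x in r) / len(r)
    return data + lam * physics


def explicit_step(u, dt, dx, D):
    """One explicit-Euler diffusion step with fixed boundary values."""
    r = D * dt / dx**2
    interior = [u[i] + r * (u[i - 1] - 2.0 * u[i] + u[i + 1]) for i in range(1, len(u) - 1)]
    return [u[0]] + interior + [u[-1]]


dt, dx, D = 0.1, 1.0, 0.1                      # hypothetical constants
u0 = [0.0, 0.0, 1.0, 0.0, 0.0]                 # initial cytokine spike
u1 = explicit_step(u0, dt, dx, D)              # "observed" next state

print(pinn_style_loss(u0, u1, u1, dt, dx, D))  # physically consistent prediction
print(pinn_style_loss(u0, u0, u1, dt, dx, D))  # frozen field: violates diffusion
```

The frozen prediction stays close to the data yet pays a much larger physics penalty. This penalty is the mechanism by which a PINN can trade some statistical fit for physical consistency.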
