A lot of causal discovery starts with a tidy picture of the world. One variable affects another. That one affects a third. The arrows move forward and never circle back. That picture is often useful. But it is also an assumption.

Our paper, Park et al. (2025), starts from a simple point: if feedback is part of the system, then ruling out cycles from the start may already put the wrong structure on the problem.

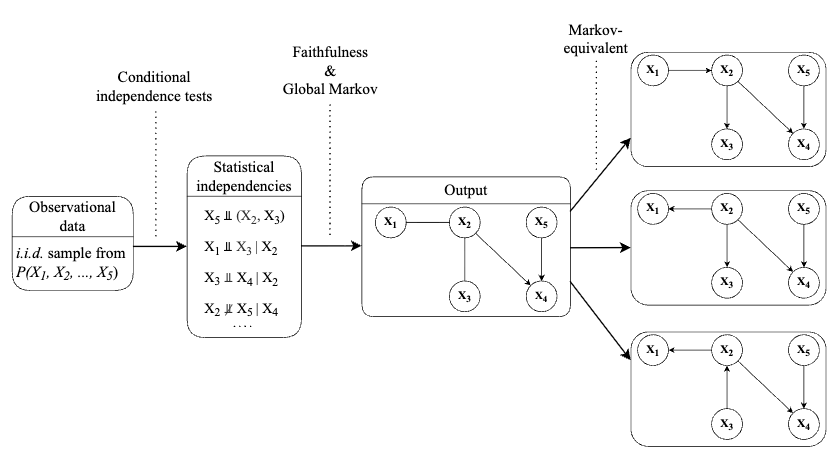

The paper introduces cyclic causal discovery in an accessible way and compares three methods designed for that setting: CCD (Cyclic Causal Discovery), FCI (Fast Causal Inference), and CCI (Cyclic Causal Inference). The goal is not to claim that cyclic methods somehow solve causal inference. It is to ask a more basic question: what changes once we allow the possibility that variables can be both causes and consequences of one another?

The answer is: quite a lot.

From one graph to many

Once cycles are allowed, causal discovery becomes less tidy. Conditional independence patterns no longer map as cleanly onto direct causal relations. Instead of recovering one neat graph, these methods often recover a broader equivalence class: multiple graphs that fit the same observed dependence structure.
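
To make the point about conditional independence concrete, here is a minimal sketch. It is not taken from the paper. It assumes a linear model with independent Gaussian noise that has settled into equilibrium, and the coefficients and helper names (`implied_covariance`, `partial_corr`) are made up for illustration. In the chain X1 → X2 → X3, conditioning on X2 separates X1 from X3. Add a single feedback arrow X3 → X2 and that separation disappears, even though X1 is still not a direct cause of X3.

```python
import numpy as np

def implied_covariance(B, noise_var=None):
    """Equilibrium covariance of X for the linear model X = B X + e.

    B[i, j] is the (illustrative) effect of variable j on variable i.
    Assumes independent noise terms and an invertible (I - B).
    """
    d = B.shape[0]
    if noise_var is None:
        noise_var = np.ones(d)
    A = np.linalg.inv(np.eye(d) - B)  # X = (I - B)^{-1} e
    return A @ np.diag(noise_var) @ A.T

def partial_corr(sigma, i, j, cond=()):
    """Partial correlation of variables i and j given the variables in cond."""
    idx = [i, j, *cond]
    P = np.linalg.inv(sigma[np.ix_(idx, idx)])  # precision of the sub-model
    return -P[0, 1] / np.sqrt(P[0, 0] * P[1, 1])

# Acyclic chain: X1 -> X2 -> X3 (indices 0, 1, 2).
B_acyclic = np.zeros((3, 3))
B_acyclic[1, 0] = 0.8  # X1 -> X2
B_acyclic[2, 1] = 0.5  # X2 -> X3

# Same chain plus one feedback arrow: X3 -> X2.
B_cyclic = B_acyclic.copy()
B_cyclic[1, 2] = 0.4   # X3 -> X2 closes the loop X2 <-> X3

rho_acyclic = partial_corr(implied_covariance(B_acyclic), 0, 2, cond=(1,))
rho_cyclic = partial_corr(implied_covariance(B_cyclic), 0, 2, cond=(1,))

print(f"X1, X3 given X2 (acyclic): {rho_acyclic:.3f}")
print(f"X1, X3 given X2 (cyclic):  {rho_cyclic:.3f}")
```

In the cyclic version, X1 and X3 stay dependent both marginally and after conditioning on X2, so a constraint-based method cannot tell from independence tests alone whether there is a direct link between them. That is one reason the output ends up being a set of candidate graphs rather than a single one.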

Honesty about uncertainty

Recovering an equivalence class rather than a single graph is not a weakness so much as honesty. If the system itself contains feedback, then the method should be allowed to represent feedback too. Otherwise, acyclicity stops being a convenience and becomes a distortion.

That is the broader message of the paper. Causal discovery is not only about extracting arrows from data. It is also about deciding what kinds of structures your method is even allowed to see.